HackBytes is a hackathon jointly sponsored by Loblaws Digital and Microsoft. The goal was to build something that used computer vision in a retail environment. Right away the creative juices started flowing, and some incredible concepts emerged from this 24-hour coding spree.

Team BlackBerry once again dominated with a strong showing of the capabilities of BlackBerry AtHoc. Our demo took a three-pronged, microservices-based approach to enhancing the capabilities of security cameras in a retail space. The project, titled ‘Sight for Store Eyes’, accomplished three things:

1. Real-time detection of blacklisted individuals with Azure computer vision, followed by an automatic alert to security teams at the store.

2. Real-time detection of a customer showing the peace sign to a camera, broadcasting an alert to customer service staff to let them know a customer in aisle ‘x’ needs help.

3. Analytics on the data collected from these AI-driven alerts.

In the United States alone, retailers lose $50 billion a year due to theft. A big chunk of this loss is often due to a small handful of repeat offenders. To address this problem, our team built a system that detects blacklisted individuals and alerts security staff to their presence. We leveraged the Azure Face API and BlackBerry AtHoc to bring the idea to life. An iOS app simulated a security camera feed, and the Face API was used both to train an AI model and to detect blacklisted individuals as they entered the store. When one was detected, the iOS app sent a request to the team’s AtHoc server, which sent an SMS to the store’s security team with the identity of the individual in the payload of the text.
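The detect-then-alert step above can be sketched roughly as follows. This is a minimal illustration, not the team's code: the `FaceMatch` type, the payload fields, the 0.8 threshold, and the `send` callback (standing in for the request to the alert server) are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Assumed threshold; the post does not say what confidence the team required.
MATCH_THRESHOLD = 0.8


@dataclass
class FaceMatch:
    person_name: str   # identity returned by the face-identification step
    confidence: float  # match confidence in [0, 1]
    store_id: str      # hypothetical identifier for the camera's store


def build_security_alert(match: FaceMatch) -> Optional[dict]:
    """Return an alert payload for a confident match, or None otherwise."""
    if match.confidence < MATCH_THRESHOLD:
        return None
    return {
        "audience": "security",
        "store_id": match.store_id,
        "message": f"Blacklisted individual detected: {match.person_name}",
    }


def handle_match(match: FaceMatch, send: Callable[[dict], None]) -> bool:
    """Build the alert and hand it to `send`; return True if an alert went out."""
    payload = build_security_alert(match)
    if payload is None:
        return False
    send(payload)  # in a real system this would be the request to the alert server
    return True
```

Passing the sender in as a callback keeps the matching logic independent of whichever alerting service actually delivers the SMS.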

Next, our team looked inward to solve a personal problem: “How many times have I needed help in a big grocery store, but couldn’t find anyone?” Rather than hire more staff, we leveraged computer vision and AI to detect customers in need. We trained a custom model to detect ‘peace signs’ from customers using Azure’s Custom Vision API. Once the model could accurately detect customers showing a peace sign to the security camera, we exported it to Core ML for use on our simulated iOS security camera. Then, when the model detected a customer requesting help, the iOS app sent a request to our AtHoc server, which sent an SMS to customer service staff saying, ‘A customer has requested help in aisle x’. The tool has broad applications in the retail sector and can be used to dramatically enhance the customer service experience.
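One practical detail in a flow like this is avoiding a flood of messages while a customer holds the gesture. The sketch below shows one way that could be handled; the post does not describe how (or whether) the team did this. The `peace_sign` label, the 0.9 threshold, and the three-frame minimum are illustrative assumptions.

```python
from typing import Iterable, Iterator, Tuple


def help_requests(
    frames: Iterable[Tuple[str, float]],
    aisle: str,
    threshold: float = 0.9,    # assumed confidence cut-off
    min_consecutive: int = 3,  # assumed number of frames the gesture must persist
) -> Iterator[str]:
    """Yield one help message per sustained peace-sign gesture.

    `frames` is a sequence of (label, confidence) pairs, one per camera frame,
    as a gesture classifier might produce them.
    """
    streak = 0
    for label, confidence in frames:
        if label == "peace_sign" and confidence >= threshold:
            streak += 1
            # Fire exactly once, when the streak first reaches the minimum.
            if streak == min_consecutive:
                yield f"A customer has requested help in aisle {aisle}"
        else:
            streak = 0
```

Each yielded message would then be handed to the alerting service for delivery to customer service staff.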

Finally, our team took this AI-driven alert system and built a web analytics dashboard on top of it. From the dashboard, upper management can gain insight into things like which aisles generate the most customer questions and which locations repeat offenders are targeting.
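The kind of aggregation behind such a dashboard might look like the sketch below. The alert record shape (`type`, `aisle`, `location` keys) is a hypothetical format chosen for illustration; the post does not describe how the alerts were stored.

```python
from collections import Counter
from typing import Iterable, Mapping


def summarize_alerts(alerts: Iterable[Mapping[str, str]]) -> dict:
    """Count help requests per aisle and blacklist matches per location."""
    help_by_aisle = Counter()
    offenders_by_location = Counter()
    for alert in alerts:
        if alert["type"] == "help_request":
            help_by_aisle[alert["aisle"]] += 1
        elif alert["type"] == "blacklist_match":
            offenders_by_location[alert["location"]] += 1
    # most_common() returns (key, count) pairs sorted by descending count.
    return {
        "help_by_aisle": help_by_aisle.most_common(),
        "offenders_by_location": offenders_by_location.most_common(),
    }
```

Sorting by count puts the busiest aisles and the most-targeted locations at the top of each list, which is the view the dashboard is meant to give.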

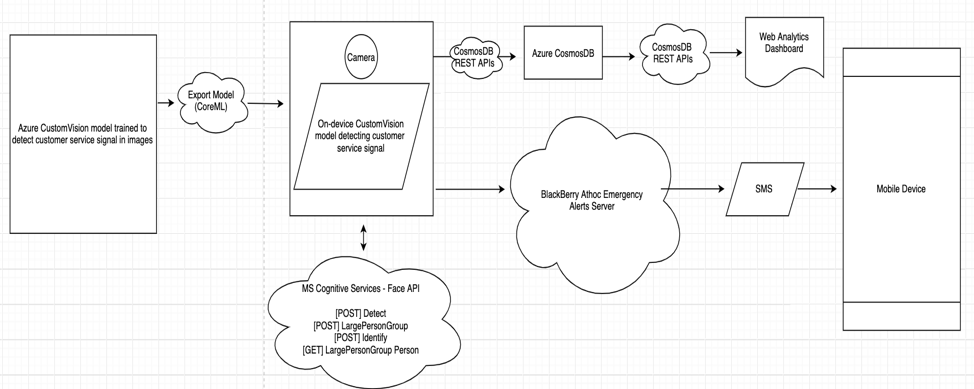

For a high-level view of our architecture, check out the image below: